As countries around the world face an unprecedented health crisis and domestic economic downturns, many are also becoming more inward-looking. Under these circumstances, it becomes even more essential to ensure that each pound, dollar, and peso spent on development contributes to saving lives and improving people’s welfare. Any money spent without critically diagnosing the root causes of the development problem and without drawing on evidence of effectiveness is potentially a missed opportunity to do so.

The International Initiative for Impact Evaluation, or 3ie, an international NGO dedicated to improving lives sustainably in low- and middle-income countries through evidence-informed decision-making, is committed to generating high-quality, relevant research evidence and promoting its use.

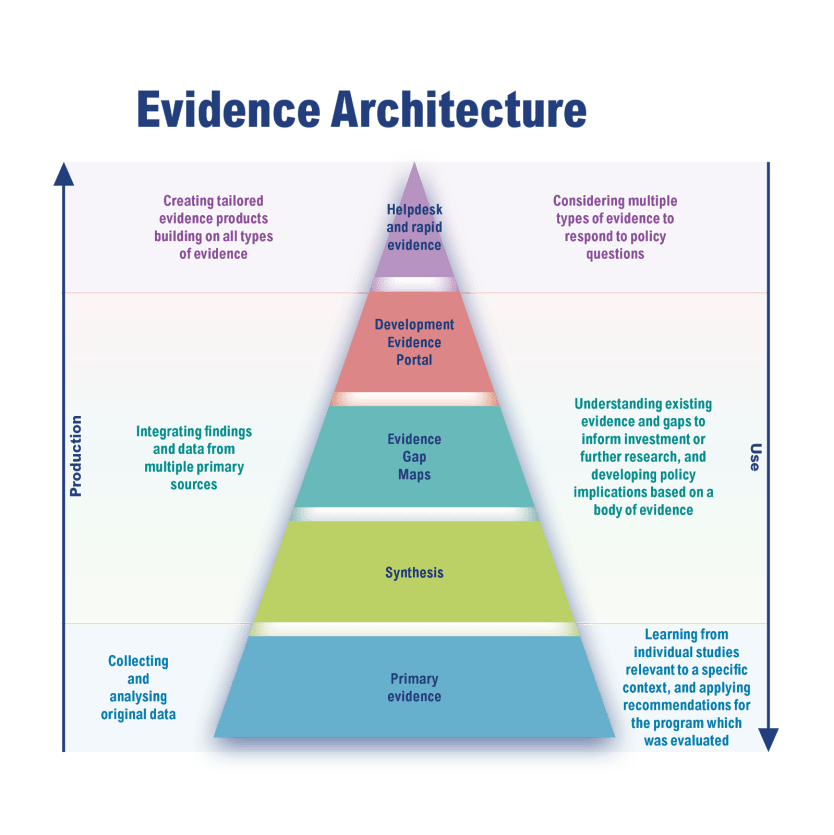

We work across every step in the cycle of evidence production and use, which we conceptualize as the global evidence pyramid. At the base of the pyramid are theory-based, mixed-methods impact evaluations that assess the effectiveness and cost-effectiveness of development interventions and help identify the circumstances under which an intervention can be most effective. The lessons from individual studies are most relevant to a specific context, and the ensuing recommendations apply mainly to the program that was evaluated.

At the next levels up, we integrate findings from multiple primary and secondary sources of development effectiveness research. This helps us understand the existing evidence and its gaps, inform investment or further research, and develop policy recommendations based on a body of evidence.

At the tip of the pyramid, all of this is brought together in a searchable platform, the Development Evidence Portal (DEP), which allows us to curate knowledge and provide bespoke guidance notes and policy briefs to inform policy and programming decisions at distinct levels.

While DEP makes curated evidence available with a single click, we recognize that promoting use requires more than making quality evidence easily available. “You can lead the horse to water, but you can’t make it drink,” according to the old proverb.

With this in mind, we decided to ply our trade, monitor the horse’s water intake, and assess what caused it. Between 2015 and 2020, we used contribution tracing to assess whether and how 3ie-supported research contributed to discussions and decisions among government implementers and other actors. We tracked more than 170 evidence-use stories from 102 3ie-supported studies, and our new evidence impact summaries portal highlights 50 of them. Here is what we learned:

1. Select the right questions

In almost all cases, we found that what mattered was choosing relevant research questions. Whether the work is a program impact evaluation, a systematic review, a rapid evidence assessment, or an evidence map, we encourage research teams to work with program implementers and decision-makers to understand the context and choose relevant questions, even if these are not the easiest to address with the latest methodological techniques that maximize the chances of scholarly publication.

2. Maintain dialogue with stakeholders who might find the research evidence useful

When researchers, implementers, and decision-makers engage and partner with one another, they can build mutual understanding and improve the use of evidence. While the use of research may depend on a constellation of factors, and even happenstance, improving the chances that research influences action or thinking requires investment.

A dialogue between researchers, decision-makers, and other actors can help with that. Maintaining an ongoing dialogue improves the chances of generating findings that address relevant and urgent policy and implementation challenges. It also helps bring evidence to users when they need it, in a format that is actionable and understandable.

3. Institutionalize evidence-use systems in government to ensure improved development impact


In 3ie’s early days, researchers conducted studies with support from government agencies but did not always address the latter’s needs and priorities. Where governments have strong systems championing evidence-informed decision-making, though, they can ensure that the research works for them.

In the Philippines, for example, the National Economic and Development Authority (NEDA), the government’s premier planning agency, steered a program to strengthen the culture of evidence use across the Philippine government.

The Philippines Evidence Programme, a partnership between the Australian Department of Foreign Affairs and Trade, 3ie, and NEDA, supported studies evaluating major governmental service delivery schemes and reforms. The second phase of the partnership aims to advance and embed the government’s national evaluation policy framework.

Findings from the studies are now feeding into decision-making. For instance, as part of the program, the Philippine Department of Labor and Employment (DOLE) commissioned impact and process evaluations of its popular Special Program for Employment of Students (SPES). DOLE facilitated and closely supervised the study through a steering committee.

This buy-in helped the researchers present their findings and implications to the regional and municipal officials who implement SPES. The findings showed that SPES had no impact on enrolment and underscored the need to strengthen the skills and employability of participating youth.

Since then, DOLE has acted on some of the study’s recommendations. SPES’s focus has shifted from education to employment, and the program guidelines now include life skills training.

3ie’s other country evidence partnerships have seen similar success in delivering relevant evidence and strengthening its use in decision-making. In Uganda, for example, the Office of the Prime Minister partnered with 3ie. By the end of four years, the partnership had worked with several departments and contributed to government efficiency and effectiveness.

A recent initiative, born of necessity and demand during the COVID-19 pandemic, is the West Africa Capacity-building and Impact Evaluation (WACIE) Helpdesk, a partnership between 3ie and IDinsight. It provides rapid evidence synthesis and translation to help policymakers in West Africa answer critical and urgent policy questions and design effective and cost-efficient development programs. While it is too early to say how the rapid evidence briefs have been used, the interest the WACIE Helpdesk approach has attracted is very promising.

The international evidence community has come a long way in understanding what it takes to make critical thinking and evidence an integral part of policies and programs. Still, more can be done to create incentives for policymakers and development institutions to take advantage of this evidence.