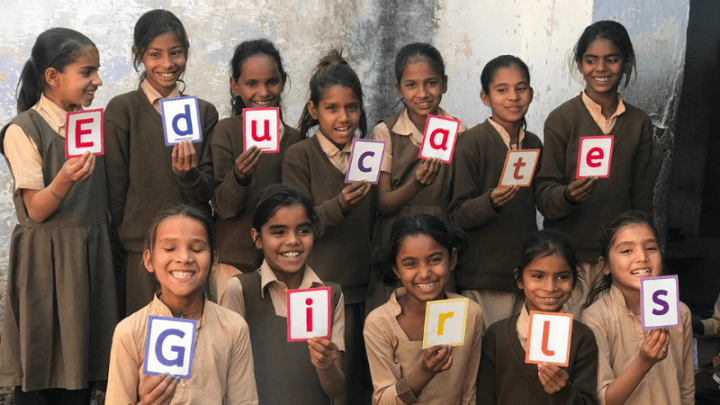

WASHINGTON — The first year was a scramble to get the program up and running, and year two was full of stops and starts. It wasn’t until the third and final year of the Educate Girls development impact bond that things clicked into place. But even with a two-year ramp-up to smooth operations, the impact bond outperformed the goals set.

The Educate Girls DIB, which kicked off in 2015, was one of the first development impact bonds launched. The new model was the result of some in the aid community looking to find new financing tools that could reduce risk to donors and have the potential to bring in private investors. The results, released Friday, are sure to set off a conversation about the funding mechanism, how it works, and what can be learned about the burgeoning field. But there are limits on how much can be extrapolated from this single example.

The Educate Girls DIB had not been on track to meet the bond’s goals, but made such progress in year three that it managed to significantly exceed them. The organization achieved 160 percent of the learning gains that it had targeted, helping thousands of children in public schools in Rajasthan, India, improve their education levels, data released by the organization shows.

The Educate Girls bond is a results-based financing mechanism, through which the UBS Optimus Foundation provided upfront funding for a program aiming to get more girls in school and improve overall educational attainment in 140 communities in Rajasthan.

The Children’s Investment Fund Foundation, as the outcome payer, has repaid UBS’ investment plus a 15 percent return because the agreed-upon targets were surpassed. Educate Girls also received a bonus incentive payment for the success. UBS Optimus invested about $270,000, with evaluation costs nearly matching that number. The total costs, including management expenses and investments around the public sharing of results, reached about $1 million.

Since the Educate Girls bond launched, some half-dozen development impact bonds have gotten underway, with others in the pipeline. But when it started, back in 2015, it was paving the way for a nascent financing tool.

“We did this as a sort of proof of concept, and we’re so excited that the DIB as a new financing tool can really be used to support and scale innovative solutions that can demonstrate results,” said Grethe Petersen, CIFF’s director of strategic engagement and communications.

Now that the time-consuming and sometimes challenging three-year journey has come to an end, those involved commend the work, but are also quick to warn that DIBs are not the right tool to fund all development challenges.

And there are skeptics as well. Justin Sandefur, senior fellow at the Center for Global Development, said that he’s not overwhelmed by the results or the importance of this DIB, though he thinks it’s an interesting pilot.

Sandefur said that it is hard to tell whether the learning gains are significant. While they seem “reasonably comparable to other programs, as they note,” it “doesn’t sound revolutionary for 3 years,” he told Devex in an email. The gains appear to be solid, but “hardly unprecedented for a pilot,” he said, noting several examples of organizations showing bigger learning gains.

UBS, CIFF, Educate Girls, along with Instiglio, which managed the DIB, and IDinsight, the independent evaluator, all took lessons away from the experience — from the size of the intervention, to the lead time needed, to how to manage expectations.

One often under-discussed impact, several commentators said, was the way a DIB can help transform a culture and a myriad of processes at the implementing organization. It’s also a new paradigm for some funders, who are used to being in the driver’s seat and having more say on how a program is carried out.

While this DIB gives some insights into how the instrument might be used, it also highlights key challenges, including the high costs involved in managing and evaluating them. And it only begins to answer questions about how well they might work and in what circumstances.

How it worked

Before starting the program, Educate Girls carried out a census-like, door-to-door survey to create a list of out-of-school girls in 34,000 households. The survey identified 837 out-of-school girls in the communities the DIB was serving, and the program aimed to get 79 percent of them enrolled.

The DIB overall aimed to impact 18,000 children, about 9,000 of them girls, by providing improved educational gains or getting students enrolled.

Early on, the DIB had to evaluate the education level of students in schools they would be working in. A team of about 160 community volunteers was deployed to both get more girls in school and supplement lessons in the deeply under-resourced government schools in which the DIB was being implemented.

The schools, often little more than a mat on the floor and a blackboard with chalk, mostly lack electricity, desks, and chairs. But through the program, about three times a week, an educated person from the local village comes in to teach English, Hindi, and mathematics through songs, activities, and games that help students gain critical reading and writing skills.

In year one, Educate Girls had to hire and train staff rapidly and spent the year building a performance management system. But the constrained timelines — the DIB was finalized close to the start of a new school year — meant there wasn’t enough time for a typical startup process for a new district. Based on what it learned in year one, Educate Girls spent year two building new learning content. Safeena Husain, founder and executive director of Educate Girls, called it “a development year,” in which staff was also getting used to data.

In year three, “everything kind of fell into place,” she said, with new materials in hand and more staff understanding how to work with the near real-time data it was collecting. The program was able to identify problem areas and address them rapidly.

“Year one and two we weren’t getting there, there was so much focus on what to do better, that rigor was the biggest learning for us,” Husain said.

During the course of the DIB, Educate Girls changed its classroom management techniques, content, training, assessments, and the community mobilization process.

On the data front, in year three, frontline staff were looking at data and using it to change their monthly and weekly plans. This enabled staff to shift resources and make decisions based on areas that may need more attention than others, rather than simply allocating resources equally across the communities it works in.

Results and measurement

Simply put, Educate Girls surpassed the targets set by the DIB in both learning gains and getting out-of-school girls enrolled.

More than 7,000 students who studied in the Educate Girls programs gained 8,940 more ASER learning levels than those in the control group, 160 percent of the target. ASER, the Annual Status of Education Report, is an annual survey that aims to provide reliable estimates of children’s enrollment and basic learning levels for each district and state in India.

The learning gains for students in the Educate Girls schools were 28 percent higher than the learning gains for students in the control schools. Year three gains accounted for more than 6,000 of the overall learning level gains, while years one and two hovered at about 1,400. That translates to students on average gaining 1.08 ASER levels more than those in the control group — a jump linked to, for example, moving from words to paragraphs.

The ASER standard allowed the DIB to set targets against other successful education programs, and the results put it in the upper end of the outcomes spectrum when compared with other impactful education interventions, said Neil Buddy Shah, chief executive officer and founding partner at IDinsight.

At the end of the DIB’s third year, Educate Girls had enrolled 92 percent of the 837 eligible out-of-school girls in the villages, 116 percent of the target. IDinsight, the independent evaluator, did not conduct a household census in the control group, so the enrollment estimates don’t show a causal effect of the programs.
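
The enrollment figures can be cross-checked with a little arithmetic. The short sketch below is illustrative only: it uses the figures reported above (837 eligible girls, a 79 percent target, and 92 percent enrolled), while the variable names and the rounded head counts are assumptions of this example rather than numbers published by the evaluator.

```python
# Figures reported in the article.
eligible_girls = 837
target_rate = 0.79
achieved_rate = 0.92

# Approximate head counts implied by the rates.
# Assumption: both rates apply to the same list of 837 girls.
target_girls = round(eligible_girls * target_rate)
enrolled_girls = round(eligible_girls * achieved_rate)

# Achievement relative to the target, as a percentage.
percent_of_target = achieved_rate / target_rate * 100

print(f"Implied target: about {target_girls} girls")
print(f"Implied enrollment: about {enrolled_girls} girls")
print(f"Share of target achieved: {percent_of_target:.0f} percent")
```

Dividing 92 by 79 gives roughly 1.16, which is consistent with the reported 116 percent of target.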

In this case, with an NGO supplementing government schools, there are a number of different factors that could lead to learning changes, Shah said. A randomized controlled trial allows the results to be attributed to the work of Educate Girls, untangling a set of complicated factors so that the outcome payer can be confident the results they are paying for came from the organization’s work, he said.

The evaluation was also designed to try to prevent perverse incentives, such as Educate Girls sending home underperforming students in order to skew the data more positively and hit the target. To that end, IDinsight worked to track down students who were not at school and visited them at home, sometimes multiple times.

Students who could not be found, and therefore had no final or endline scores, were not included in the analysis, which raises some concerns about overestimating the impact. IDinsight acknowledged the risk and did the repeated home visits in an effort to mitigate that risk. The result was that 4 percent of students had no endline score, due largely to students moving or declining to participate.

“It’s super interesting to see the hypothesis of impact bonds, at least partly, really play out in this project,” said Avnish Gungadurdoss, co-founder and managing partner of Instiglio, which managed the DIB.

“We were surprised by the third-year results, but if you look at the trajectory of Educate Girls and improvements and capacity, you start understanding why year three results are so much better,” Gungadurdoss said.

That’s in part because the process of managing for results — of building and operating performance management systems — takes time. Instiglio underestimated the time and support it would take to help the team understand and use data and set up internal systems to manage performance, Gungadurdoss said.

Educate Girls did increase the intensity of the intervention and adjust its strategy in year three, in part by switching to a group approach that put children of similar levels together; implementing an improved curriculum; adding sessions during holidays; conducting more home visits overall, including visits to reach students who were frequently absent; and assigning more staff per student, he added.

Educate Girls also hired some additional personnel — mainly floating field coordinators — and may have spent a bit more in year three, but the gains were made without a significant increase in funding, according to Educate Girls.

What implementers would have done differently

This DIB was a learning process, and there’s more than one thing those involved would change if they could do it over again — ranging from the size of the investment, to scale-up time, to how progress was tracked.

Timing was a key issue in year one. The consensus seemed to be that there should have been more time for Educate Girls to prepare to launch the DIB, which would have allowed them to build up staff, set up performance management systems, and carry out authentic baseline surveys so that they had accurate primary data, Educate Girls’ Husain said.

In this case, they relied on problematic secondary data for the baseline surveys, and they had to renegotiate targets and pricing when they discovered the data they used was unreliable.

Better alignment and understanding of the evaluation process also would have helped, Husain said. In the second year, Educate Girls didn’t know IDinsight would do home visits — and the results on learning performance took a hit because of girls tested at home. Yet, this data positively impacted the work, prompting Educate Girls to change the program in order to follow up with the girls, ensuring participation and providing extra support.

Reducing assessment time would also have helped — about a month of teaching time was lost each year for assessment days, Husain said.

The contract, despite their best efforts, didn’t include language about how to deal with unforeseen challenges, such as the inaccurate baseline data or a school shutting suddenly, which was problematic, CIFF’s Petersen said.

Instiglio’s Gungadurdoss said he would have put more process evaluations in place to show how the performance management model was working and track how the organization evolved over time.

Developing more of an understanding of field officer jobs early on in the stakeholder conversations, particularly for CIFF and UBS, would have been useful, he added.

Lessons learned

While several of these organizations have gone on to work on other DIBs, applying some of the lessons they’ve learned in the process, there are also some bigger-picture takeaways, from how the culture at Educate Girls was transformed to how the DIB forced CIFF to adopt a new mindset.

While Educate Girls was already using data, and had a randomized controlled trial done before the DIB, it had a lot to learn in terms of managing data and using it to improve programs. The DIB required employees throughout the organization to think differently, which was possible in part because having clearly defined targets helped build ownership, not only on the ground but throughout the support team, Husain said.

“We talk about the use of data in the development sector — when you have clearly defined results, then data-driven decision-making becomes easier and can help you accelerate and amplify,” she said.

Educate Girls was able to use that data to adjust the program, change the curriculum, adapt training, and divert resources and staff where they were most needed, Husain said.

The DIB didn’t just mean changes for Educate Girls; it also forced a new mindset for CIFF, said Petersen. A DIB’s design is supposed to leave the innovation at the intervention level, giving the implementer the ability to innovate and adjust, rather than dictating what should be done, she added.

That was different from how CIFF usually does its grantmaking, in which the fund is quite involved in helping to find solutions. The DIB’s process taught CIFF that there is a lot of good innovation “happening closest to the ground,” Petersen said. The organization is now eager to support local and national organizations closest to beneficiaries and allow its grantees the ability to innovate and be agile in their programming, she said.

“From an impact perspective, which is our goal, it has been very successful,” said Phyllis Costanza, CEO of the UBS Optimus Foundation, adding that with a 15 percent internal rate of return, it was also positive from a financial perspective.

“We did this because we really wanted to test if this could work in a development setting and we wanted to learn lessons from this — and from that perspective it’s been an incredible learning experience,” she added.

For Costanza, there were a few lessons. One is ensuring the starting point is right so that the instrument and outcomes are developed properly; another is trying to simplify the evaluation, in part because randomized controlled trials are expensive.

The upfront cost to realize this DIB was high, Costanza said, but the foundation was cautious, looking to prove the concept before investing more. UBS Optimus Foundation is already involved in another DIB, and working on others, all of which involve larger investments.

Husain echoed the sentiment, saying that the expense and high transaction cost mean DIBs are the right fit only in specific situations.

“A DIB is a really really good [research and development] tool,” Husain said, adding that she would consider one in the future if Educate Girls was looking to scale a new program, but that it wouldn’t make sense for the same intervention. Husain would like to see donors innovate their grant funding mechanisms instead, to allow for the kind of creativity and adaptation that the DIB did, she added.

“There was a lot learned benefiting the organization and the children we serve for years to come,” Husain said.

What it means

Just because this single and admittedly small pilot with high administrative and measurement costs showed results, doesn’t mean that everyone should jump into creating DIBs.

“There is a danger of people writing about it saying this is proof DIBs work, we need to be careful,” Gungadurdoss cautioned.

It worked for this organization and these education targets, but it’s critical to see how other organizations will replicate it, he said, adding that over time some organizations may be more or less responsive to the model.

This DIB was also a small project with a relatively small amount of money invested, where management and evaluation costs dwarfed the sum invested in carrying out the work on the ground. Costanza said if she had to do it again, she would have invested more, maybe $1 million.
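
To put that ratio in rough numbers, the sketch below works from the approximate figures reported earlier (about $270,000 invested and about $1 million in total costs). It treats everything beyond the investment as non-delivery cost, which is an assumption of this example; the article does not give a line-by-line breakdown, and the variable names are illustrative.

```python
# Approximate figures reported in the article (U.S. dollars).
investment = 270_000      # upfront funding from UBS Optimus
total_cost = 1_000_000    # total, including evaluation, management, and results sharing

# Share of the total that funded the work on the ground.
investment_share = investment / total_cost

# Assumption: the remainder is evaluation, management, and results sharing.
other_costs = total_cost - investment
other_costs_per_dollar = other_costs / investment

print(f"Share of total cost invested in delivery: {investment_share:.0%}")
print(f"Other costs per dollar invested: ${other_costs_per_dollar:.2f}")
```

On these rounded figures, a little over a quarter of the total went to the intervention itself, which is the imbalance the partners describe.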

The ratio of evaluation cost to investment was high, and not sustainable in the long run, Shah admitted. But, Shah said, it was reasonably priced given that it required multiple field visits and sometimes repeated efforts to track down students. He added that a similar evaluation would be used to measure a much larger investment, without necessarily incurring a much higher cost.

Sandefur said that cost calculation for the DIB should include all the expenses, and should be part of the cost-benefit ratio, so that it accurately reflects the total expenditure for the results, which the final evaluation does not do. It will also help people to better understand how DIBs work to have the breakdown of the costs each partner spent, he added.

The stakes have been high, and it has been an exhausting push for Educate Girls, Husain said. Her team will take some time off to reflect on what they learned and think about what DIB elements it will scale to the 29,000 schools it works in. Moving forward, Educate Girls will be looking for funders willing to give them results-based, multiyear funding that is flexible so they can continue to deliver, Husain said.

Educate Girls will remain in the DIB schools for three years, and will keep track of the control group, to evaluate changes over time. Indeed, the Educate Girls model is not to stay in communities forever. It approaches sustainability in three ways: It works to shift capacity to the community through local volunteer and village ownership; it stays until 90 percent of out-of-school girls are in school and long enough to create a mindset change in the community; and it typically stays in a place between six and nine years.

“One thing you should take away from this DIB is that decision-making, prescribing what happens next, should be as close to the ground as possible,” Husain said, adding that it is a mindset shift for the funding community.

While DIBs have the ability to incentivize implementers to improve reporting, use data better, and work based on results, those changes can also be achieved through different mechanisms. One of the key ideas behind DIBs was for them to be a mechanism to mobilize private capital.

“The real test will be when CIFF or UBS tries to do this with an actual investor who is in it for the money, and not just playing along to prove the concept,” Sandefur wrote.

“Or they try to do this with a government, and all the messiness of politics and legal challenges come into play. For now, I don’t really believe the innovative finance is doing the work — causing an indifferent implementer to push for better results. Instead, the organizational culture of the various philanthropic partners and NGOs is aligned and the money is a bit of a charade.”

It will take time to get investors on board, Costanza said, pointing to the many years it took private equity and venture capital to become new asset classes. There will need to be more study and there will have to be larger scale DIBs, in part to reduce the overall cost of performance management systems and measure the results.

“The goal is not to be [a] niche small player, but to achieve long-term and large-scale impact,” Costanza said. “There’s only two ways to do it really, through government or through the private sector, so the question is how can we engage capital markets.”

The goal is to make DIBs investable so that large financial institutions can take them on, and the UBS Optimus Foundation is looking at how to do that, she said. The investments have to be de-risked and because philanthropy doesn’t price in risk or track success carefully enough, there is a lack of transparency that limits the ability of the industry to price interventions.

“The fact is, now we are truly starting to factor in price in the risk of these projects, which is something our sector has never done,” Costanza said. “We pay on cost of delivery — have never factored in risk to pricing — and never priced accurately to begin with.”

DIBs can help shift the thinking and create a conversation around pricing risk. Donors who don’t want to assume risk have to pay a price to transfer that risk, Costanza said.

Sophie Edwards contributed reporting to this article.
